Fentanyl Almost Killed Michael Brewer. Now He Wants Snap to Pay

A lawsuit arguing that Snapchat helped dealers sell children deadly counterfeit drugs could change the internet as we know it.

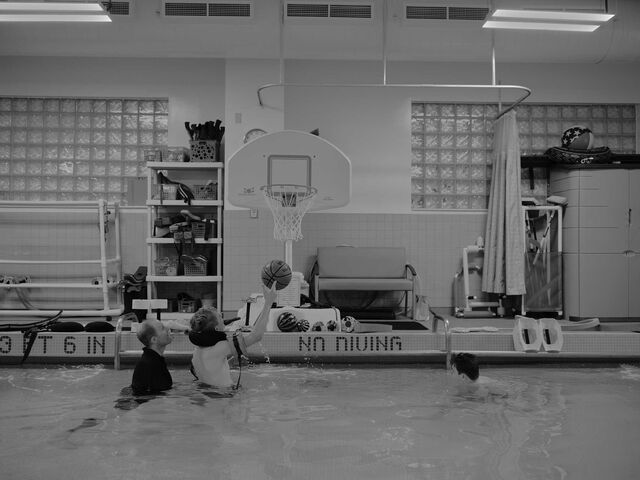

“I’m a survivor, and that’s bad for you, CEO of Snapchat, because, uh, uh, uh …” Michael Brewer can’t finish his sentence. The teenager’s speech is slow and slurred, interrupted by an involuntary gag reflex as his tongue slides down his throat—a symptom of a brain injury caused by fentanyl poisoning.

It’s a muggy August afternoon in Jacksonville, Florida. Michael’s legs are strapped into a stationary exercise bike at a rehabilitation facility where he’s learning to regain control of his body and, he hopes, to walk again. Eminem pumps through his headphones. Rain pelts the windows, but Michael can’t see it, because the fentanyl also left him blind. He swallows, breathes and tries again: “I survived, and that’s bad for you, Snapchat, because I’m talking on the record.”

Michael isn’t referring only to his interview with a Bloomberg Businessweek reporter. He’s also a star witness in a lawsuit 64 families are pursuing against Snap Inc., alleging that the company’s Snapchat app helped fuel an epidemic of teen overdoses. At 13 he connected with a dealer he met on Snapchat and bought a pill that, unbeknownst to him, was laced with fentanyl. He took it, blacked out and stopped breathing. Now 17, Michael is a star witness because he’s one of only two teens in the case who can describe what happened firsthand. All the other kids are dead.

Since 2020 drug overdoses, driven by fentanyl, have become one of the leading causes of death for teens in the US, killing more than 1,600 each year. The Drug Enforcement Administration has stressed the role social media is playing in the crisis, saying criminal drug networks are “now in every home and school in America because of the internet apps on our smartphones.”

Although drug sales can be found on all social media platforms, teens, lawyers and law enforcement officials have singled out Snapchat, the yellow app with the ghost logo, whose 850 million monthly users send billions of messages every day. The DEA has linked some deaths to Snapchat, and the FBI is investigating the platform’s role in the spread and sale of fentanyl-laced pills as part of a broader probe into counterfeit drugs.

Snapchat’s popularity is based on its automatically disappearing messages. It thrives on evanescence, unlike Instagram and Facebook, which retain every message or photo sent and only recently began offering the option of vanishing messages. Snapchat users can be silly, or naughty, because everything disappears within 24 hours by default. The app attracts minors who want to hide things from their parents, and drug dealers who want to hide evidence from police.

Snap says its disappearing messages are meant to mimic the ephemeral nature of real-life conversations, not to enable crime. “We are deeply committed to the fight against the fentanyl epidemic, and our hearts go out to the families who have suffered unimaginable losses,” says Jacqueline Beauchere, Snap’s global head of platform safety. The company says it’s spent hundreds of millions of dollars developing technology to detect dangerous content and wants to become the most hostile place on the internet for dealers. It removed 2.2 million pieces of drug-related content in 2023 and locked more than 700,000 dealer accounts.

Inside the courtroom, Snap makes a different case for itself. There, the company says it shouldn’t be blamed for the fentanyl crisis because, like a phone provider, it isn’t responsible for what’s said on its platform. The families’ lawsuit should be thrown out, Snap argues, because Section 230 of the 1996 Communications Decency Act immunizes it from liability. Social media platforms have long cited this controversial provision to assert that they can’t be held responsible for what their users say or do, and courts have agreed.

But a novel legal theory is starting to crack that immunity shield. Over the past three years, more than 1,000 lawsuits have been filed in the US against social media companies, alleging that they’ve harmed children in myriad ways, including addicting them to screens, connecting them with sex offenders and promoting suicide content. The cases argue that the platforms are dangerous products and that the companies building them should be held accountable when they cause harm. It isn’t about the platforms’ content, they say; it’s about the design. Some judges are proving receptive to the argument.

The 64 families in the Snapchat case allege that its design is responsible for connecting teens to the drug dealers who poisoned them with fentanyl. The app, they say, recommended kids befriend dealers, allowed those dealers to send them unsolicited drug menus (weed, addy, perc—$20 a pop), provided geolocation mapping tools to help with the handoff and then deleted all the evidence. The families’ lawsuit has gotten further than most. On Dec. 5, an appeals court in California denied Snap’s second request to dismiss, and now the plaintiffs could be in for a yearslong legal battle that goes all the way to the US Supreme Court. A win would upend a law that has shielded tech companies for decades, radically changing our digital world.

That’s why Michael decided to sue Snap, he says: “I want to use what happened to me to set an example, so all the social media companies can’t hide behind Section 230.”

Snap was founded in 2011, at a time when social media was typically used to share news and photos with friends and family. Rival apps opened to a digital feed that helped you stay in the loop about your connections’ birthdays and vacations, but Snapchat opened with the camera pointed at your face. Go on, it nudged: Selfie away, and don’t worry if you don’t look perfect or can’t think of something witty to say—everything disappears anyway. Users loved it, especially teens. A 2024 Pew Research Center survey found that 55% of US kids age 13-17 were on the app.

As social media platforms evolved into digital empires, the competition to retain users grew fierce. The industry shifted toward algorithmically driven feeds designed to keep people consuming content nonstop and connecting to more strangers who could provide it. Soon, predators were following child influencers on TikTok, scammers were blackmailing high school kids on Instagram—and drug dealers were hawking pills to teens on Snapchat. The app’s disappearing messages, the National Crime Prevention Council said last year, created “a digital open-air drug market allowing drug dealers to market and to sell fake pills to unsuspecting tweens and teens.”

In 2020, the first year of the Covid-19 pandemic, 1,630 teenagers died from overdoses in the US, almost double the number in 2019. The figure jumped 10% the next year. The rise wasn’t driven by increasing drug use among teens, though. The drugs had just become deadlier and more accessible. Pills sold as opioids or stimulants were being laced with fentanyl, and they were being delivered to kids’ doors.

Chart: Drug Overdoses Up Among US Teens (deaths per 100,000 adolescents age 13-19)

Fentanyl is a cheap, plentiful and highly addictive synthetic opioid that’s 100 times stronger than morphine. It can easily be produced and pressed into any pill in a black market lab, dropping the production cost while boosting the high. The DEA says that half the counterfeit pills it seizes contain enough fentanyl to kill an adult—2 milligrams, the equivalent of five to seven grains of salt.

Kimberly Fenton, a pediatric critical-care physician in Orlando, says she doesn’t see a lot of fentanyl poisonings, because kids who take the drug are usually dead before paramedics arrive. Minutes after ingesting it, breathing slows. Then, depending on the dose, it can stop completely, leading to cardiac arrest.

That’s what happened to Michael Brewer, whom Fenton treated in the intensive care unit at AdventHealth for Children hospital on Christmas Day in 2020. His younger brother, Brady, who’d just turned 12, was awakened around 3 a.m. by high-pitched, labored breathing. It sounded, Brady recalls, like someone trying to take big breaths through a straw. He walked into Michael’s room and saw him lying in bed, ghost white with blue-purple lips, “looking like he’d been strangled.” Brady ran to alert his mom.

Michael’s heart stopped four times before he reached the pediatric ICU as a code blue—pretty much dead. He’d responded to a shot of naloxone, an opioid antidote that emergency medical workers had administered, suggesting the likely culprit, but a toxicology screen didn’t show any painkillers in Michael’s urine. When Fenton assessed him in the ICU, she lifted his eyelids and saw that his pupils looked like pinpoints. She suspected fentanyl, which causes the eyes to constrict and doesn’t show up in routine toxicology tests because it’s synthetic. So she gave him another shot of naloxone. His pupils dilated and his heart rate surged, confirming her suspicion.

A few hours later, Fenton told Michael’s mother he’d been poisoned by fentanyl. “We got into his phone,” she recalls his mom saying. “He got it through social media”—specifically, Snapchat.

Two months after Michael’s overdose, Snapchat came under scrutiny when Sammy Chapman, a 16-year-old in Santa Monica, California, purchased a fentanyl-laced pill and died. His mother, Laura Berman, a relationship therapist and television host, posted about it on Instagram, bringing national attention to the issue. “My beautiful boy is gone,” she wrote. “I am not sure how to keep breathing. We watched him so closely. Straight A student. Getting ready for college. He got the drugs delivered to the house. Please watch your kids and WATCH SNAPCHAT especially. That’s how they get them.”

It had been known inside Snap since at least 2019 that drug dealers liked using the platform because of its disappearing messages, according to internal company emails cited in a lawsuit filed by New Mexico Attorney General Raúl Torrez in state court in September. His suit, like the families’, alleges that Snapchat’s design features are dangerous and have harmed minors. Snap said in public statements that it shares Torrez’s concern about online safety for young people and is committed to making the platform safer. It also filed a motion to dismiss the case, citing Section 230.

The emails were damning for Snap’s chief executive officer, Evan Spiegel, who became one of the world’s youngest billionaires after co-founding the company at age 21. In the messages, many of which were sent between 2019 and 2022, former workers with Snap’s trust and safety department complained about senior leaders ignoring them. “There was pushback in trying to add in-app safety mechanisms because Evan Spiegel prioritized design,” one wrote. The employees voiced concerns about Snapchat facilitating sextortion, about unwanted contact between kids and adults—and, in repeated emails, about the sale of drugs.

Some said Snapchat’s Discovery feed, a stream of content that the app promoted, was recommending posts by drug dealers, including menus and prices. Others shared media reports saying Snapchat’s Quick Add feature, which suggests new friends, was helping connect dealers with buyers. A 2019 presentation by a security company advising Snap, cited in the lawsuit, included a stark warning: “It takes under a minute to use Snapchat to be in a position to purchase illegal and harmful substances.” (The company says it can’t comment about internal records because of the pending litigation.)

At the time, Snap had rules in place banning users from trying to buy or sell illicit drugs, and it blocked related search terms, but the company acknowledges it wasn’t proactively looking for such content and relied instead on user reports. Given that dealers and buyers weren’t likely to self-report, Snap didn’t know how prevalent the problem was. That changed with fentanyl.

In February 2021, the same month Sammy Chapman died, Snap’s global head of public policy, Jennifer Stout, was working remotely from her home outside Washington, DC, when she received an email about another fentanyl death involving Snapchat. She recalls being shocked by the pattern that appeared to be emerging. “As a mom to teens myself, it felt very personal,” Stout says. “It felt like it could happen to my kids, it could happen to my neighbors.” She’d seen stories of teen overdoses linked to counterfeit pills and social media, and now she began connecting the dots about the crisis. “It was happening all around us,” she says. “I wanted to just, you know, scream and say, ‘Do people understand what is going on?! Do others see what we are seeing?’”

Within weeks, the issue became a top priority at Snap, designated “code red.” The company set aside other projects and shifted resources to combating fentanyl sales on its platform. Engineers deployed filters to detect drug-related content and identify accounts that posted it. The first step was training the machine-learning model to decode teen drug slang. Some terms were intuitive, like percs for Percocet or addy for Adderall. Emojis were not: a plug 🔌 meant dealer; a shopping cart 🛒 meant THC cartridges; a gas pump ⛽ meant the good stuff.

That April, Snap arranged a Zoom call with about a dozen families who’d lost children to fentanyl. A former State Department official with two decades’ experience in government, Stout was trying to better understand the crisis. The DEA hadn’t issued any warnings, and health agencies were overwhelmed by Covid. “We needed to immediately learn more about what was happening, both in society but also on our platform,” Stout says.

The families took turns telling their stories. They were eerily similar. A teen looking for an escape from pandemic anxiety, boredom or loneliness purchases a pill through Snapchat. The pill contains fentanyl. The parents find their children dead the next morning. Stout recalls crying as she heard the stories. After the parents spoke, a safety representative from Snap said its law enforcement operations team was understaffed and vowed to fix the problem, according to people who were present.

Despite the promises, most of the parents left the call angry. Amy Neville was among them. Her 14-year-old son, Alexander, had died the previous summer in Orange County, California. One afternoon in June 2020, he’d confessed to his parents that he’d bought what he thought was an oxycodone pill from a dealer on Snapchat and was scared because he wanted to keep taking the drug. Neville called a treatment center for help, and it said it would call back the next day. But when she went to Alexander’s bedroom to wake him the following morning, she found him on his beanbag chair, cold and stiff.

Months later, one of Alexander’s friends told Neville she knew who’d sold him the pill. She showed Neville a Snapchat account with the username AJ Smokxy. Neville sent the information to the DEA and waited for a response while AJ Smokxy went on selling counterfeit pills.

By the time Neville joined the Zoom call with Stout, AJ Smokxy was under investigation for allegedly playing a role in two deaths. Neville says she listened to the company’s comments with growing rage.

Snap’s subsequent efforts to combat fentanyl and its press releases touting new safety measures did little to quell her anger. To her, it was lip service, because kids kept dying. She started coordinating with other parents, including Sammy Chapman’s mother and father, who’d complained to Spiegel in a phone call and a subsequent email that the company wasn’t doing enough to help police catch drug dealers. Spiegel’s reply was later cited in legal filings. He said the company works with law enforcement to bring criminals to justice but added, “I understand that you feel we can do better and I agree.”

In June 2021, Neville says, she and other parents decided it was time to “get loud.” Dozens of them marched to Snap’s headquarters in Santa Monica, holding photos of their dead children and chanting, “Gone in a Snap!”

That year, according to documents cited in the New Mexico case, the company deleted 1 million pieces of drug-related content and deactivated the accounts of 500,000 dealers. One internal message noted, “We see an average of about half a million unique users exposed to drug-related content every day.” Snap also switched its settings in October 2021 so users who searched for illicit drug content received substance abuse and mental health information. For many of the parents whose children had died, the improvements weren’t enough.

Neville says she’d been told by some parents that she couldn’t sue Snap because of Section 230. But as the first anniversary of her son’s death came and went, she had a revelation: “I can’t sue Snapchat and win. But I’m happy to sue Snapchat and cause a scene.”

In October 2022, the Nevilles and eight other families sued Snap in Los Angeles Superior Court, alleging that Snapchat is a dangerous product, responsible for the majority of US fentanyl deaths linked to social media. (The company declined to comment on this assertion.) “This lawsuit is not about children having access to drugs, it’s about drugs having access to children,” an amended complaint says.

Laura Marquez-Garrett, a lawyer with the Social Media Victims Law Center in Seattle and the co-lead attorney on the case, has been investigating fentanyl overdoses for more than two years. She says she’s spoken to the parents of more than 100 kids who died, ultimately leading 64 families to join the fight. “These are all children who made mistakes and didn’t deserve to die for them,” she says.

The case rests on a so-called product liability argument, a staple of tort law that requires plaintiffs to prove that a product caused the harm they’re suing over. Winning on these grounds against a company whose platform is protected by Section 230 was almost unheard-of. It would be even harder to do without a victim’s firsthand account. The parents didn’t know precisely how their children had connected with the dealers or how the sales had been arranged. Even if they’d managed to unlock their kids’ phones, the evidence had disappeared.

What the attorneys needed was a child who’d survived being poisoned by a fentanyl-laced pill purchased through Snapchat and wanted to testify. They needed Michael Brewer.

Michael was 11 when he downloaded Snapchat. That’s two years earlier than the app’s minimum age, but social media companies don’t enforce these restrictions. He says he just changed his date of birth, and—bingo—he was in.

Soon, he was using Snapchat four hours a day, trying to find ways to pump up his Snapscore, which shows how active users are on the platform. The higher the number, the greater the bragging rights. One way to boost the score is by adding friends. Snapchat’s algorithm helps with that through Quick Add, which sends friend recommendations based on shared connections and locations. Michael accepted every Quick Add suggestion Snapchat offered: Swipe, accept, score boost; swipe, accept, score boost.

One day, he said during a recorded deposition, he added someone whose username was MaryJane with a tree 🌳 and a pill 💊 emoji. “What do you want?” his new friend asked. “What do you mean?” Michael replied. Then a photo came through. It was a drug menu advertising marijuana, THC cartridges, Adderall and Percocet, dropped off at your front door.

Michael was curious about drugs and looking for an escape. The year 2020 had been difficult for him, beyond normal preteen angst. His father had just been sentenced to five years in federal prison for bank fraud and money laundering. A few months after he left, the pandemic shut down Michael’s school. For a kid with boundless energy, one who liked to show off by hurling a football 40 yards with what he calls “pinpoint accuracy,” being confined to his bedroom for Zoom school was suffocating.

He first tried THC cartridges, then moved to mushrooms and prescription pills. He liked Adderall the most. To pay for the drugs, he’d take his mom’s debit card and run to the nearest ATM at midnight to get cash. He’d meet the dealers around the corner from his house.

The only app he ever used to buy drugs, he says, was Snapchat. He recalls receiving drug menus through the app “every hour of every day.” Because the messages delete by default, he knew he wouldn’t get caught even if his mother checked his phone. And the bank card withdrawals were small enough to not set off alarm bells. When his mother did ask, he denied using the card.

A lot of his friends bought drugs on Snapchat, too, he says. Sometimes, on weekends, they’d take them together and watch a movie, because there wasn’t much else to do. One friend, Nikita Shapialevich, who’s now 18 and says he never used drugs, recalls regularly receiving Quick Add recommendations for dealers. “They start offering you, like, ‘Hey, buy this,’” he says. “And it’s honestly very tempting, especially at that young age, when it’s, like, right there. When they say, ‘I’ll come over, I’ll give it to you,’ it’s kind of hard to refuse.”

On Christmas Eve 2020, Michael says, he accepted a Quick Add for a user whose name included a pill 💊 and a mushroom 🍄 emoji and who was posting drug menus in their public stories feed. When Michael asked the dealer for Adderall, he was offered Percocet, an opioid painkiller. He’d never tried Percocet but agreed to pay $20 for two pills.

Around 11 p.m., the dealer messaged Michael on Snapchat to let him know he was outside. Michael climbed out his bedroom window. He recalls that the guy looked straight out of a low-budget ’90s film, with a hoodie, dreadlocks and gold grills. As Michael slunk back to his open window, the dealer said, “Good luck, man.”

Michael remembers swallowing one of the pills, but not much else from that night. The next thing he recalls is having a vivid dream where he was in a submarine going deeper and deeper until it “crashed into the bottom of the ocean.”

Doctors fought to keep him alive for five hours. Around the time he finally stabilized, his brother, Brady, managed to unlock his phone. He wanted to figure out what Michael had taken, in case it would help. Brady knew his brother had used Snapchat to buy marijuana and Adderall, and when he opened the app, he saw that the username of the last person Michael had messaged with had a pill and a mushroom emoji in it. The conversation was gone, but the emojis were telling enough. Brady showed his mother.

Five days later, while Michael was still on life support, his mother sent the dealer a message, asking him for more of what he’d sold last time. Then she called the police in the Orlando suburb of Winter Park, where she and the boys were living. The man who’d given her son fentanyl was on his way to her house, she said.

Lieutenant Joseph DiCarlo sped over and saw the dealer standing on the family’s front doorstep. He was arrested on the spot, with five and a half pills in his pocket. At the station, he admitted he’d sold drugs to Michael at the same address a few days earlier. His name was Luis Molina, and he was 21. He was released two days later after paying a $100 bond, according to police records.

The case was passed to prosecutors, but the evidence was thin. The hospital had never screened Michael for fentanyl, so its presence in his body wasn’t confirmed, and the pills Molina had been carrying were antianxiety meds, not opioids. DiCarlo served Snap with a search warrant for records relating to Molina’s account, but he says he didn’t get any messages between the dealer and Michael.

Molina ended up cutting a deal. He pleaded no contest to possession of a controlled substance with intent to sell and was sentenced to 36 months of supervised probation.

The news infuriated Michael’s father, Bryan, who’d been following the developments from a federal prison in Petersburg, Virginia. A Marine veteran, Bryan couldn’t understand how he’d been sentenced to five years in prison for a white-collar crime while the man he blamed for almost killing his son spent two nights behind bars, then got out on probation.

Bryan decided that if his family wasn’t going to get justice in the criminal case, he’d take the fight to Snap. He went to the prison library and started researching who could help. He soon came across the Social Media Victims Law Center.

The center filed Brewer v. Snap Inc. in April 2023, alleging—as it had in Neville’s case, with which Michael’s and those of other families were later combined—that Snapchat’s design is dangerous and that Snap should be held accountable. The company has “attempted to convince multiple grieving parents that it is immune from liability because of Section 230,” the complaint says. “Snap and its leadership are mistaken.” A spokesperson for Snap says the company can’t comment about Michael’s allegations.

The Social Media Victims Law Center was founded after a federal appeals court in California helped crack the immunity shield protecting social media companies from product liability claims. The parents of two teenage boys who’d died in a 2017 crash in Walworth County, Wisconsin, had sued Snap for negligence. Minutes before the boys’ car careened off the road, smashed into a tree and burst into flames, they’d been using Snapchat’s speed filter, a feature that measured how fast a user’s phone was traveling. Rumor at the time held that the platform would boost your Snapscore if you exceeded 100 miles per hour (which Snap says was never true). The boys clocked 123 mph before they lost control of the vehicle.

Lawyers for the boys’ parents filed one of the first product liability cases against a social media company. Snap moved to dismiss, citing Section 230. A district court judge agreed, but in May 2021 an appeals court overturned the ruling. Section 230 didn’t immunize Snap, the appellate judges said, because the company was being sued as the manufacturer of a product, not as a host for third-party content. In other words, the issue was Snapchat’s design.

Snap removed the speed filter in the months after the ruling, and the case was settled out of court in September 2023. But Lemmon v. Snap Inc. had established a new interpretation of a law that courts had treated as practically sacrosanct dating to the dawn of the internet era.

Section 230 was part of a bill passed in 1996, and it aimed to immunize builders of the still-developing internet. Prior to that, online messaging and bulletin boards faced what was called the “moderator’s dilemma”—courts had ruled that these platforms were liable for the content posted on their sites only if they tried to regulate it. That left tech companies with an impossible choice: Remove unwanted or unsavory content and expose themselves to liability claims, or moderate nothing and let their fiefdoms turn into cesspools.

Section 230 treated these providers as more akin to phone companies than to traditional media publishers that can be sued for what they print or broadcast. “No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider,” it reads. Section 230 also protected internet companies from lawsuits seeking to restrict material that complainants deemed obscene, violent or otherwise objectionable. The idea was to encourage platforms to self-regulate by moderating content without fear of being sued.

Over the next quarter-century, courts fortified Section 230. After someone posted defamatory and personal information about another user on America Online in 1995, a judge said the platform wasn’t liable. After a 14-year-old girl was sexually abused by a predator she met on MySpace in 2006, the platform wasn’t liable. After a young man overdosed on heroin bought through a social network called Experience Project in 2015, the platform once again wasn’t liable. Some critics began referring to Section 230 as a get-out-of-jail-free card.

The provision has been hotly debated. Some Democrats in Congress want to tweak it so platforms are forced to remove harmful content faster and held accountable when they don’t. Some Republicans want platforms to have less power to censor or remove third-party content, citing concerns about free speech. The political stalemate has caused dozens of proposed amendments to fail, leaving Section 230’s original wording largely unchanged, even though it was written for a pre-iPhone, pre-Facebook world.

Back in the mid-1990s, Julie Inman Grant was working in Washington for Microsoft Corp.; she helped shape Section 230, so she knows its history intimately. Now the commissioner of eSafety, Australia’s independent internet-regulatory body, Inman Grant says the shield for online platforms needs to be updated. “None of us in the industry,” she says, “would have dreamed that this immunity provision would have remained untouched for 28 years, given how much the industry has changed and how much harm technology with no care, responsibility or guardrails has wrought on humanity.” Inman Grant says it’s been encouraging to see “the cracks appearing in this defense.”

When the federal appeals court issued its ruling in Lemmon v. Snap, an attorney in Seattle noticed. Matthew Bergman, who’d spent decades litigating product liability cases against asbestos manufacturers and won more than $1 billion for his clients, decided to pivot to social media. He started the Social Media Victims Law Center with one mission: to use the product liability argument to hold companies accountable for harming kids.

In three years, the center has filed 700 cases on behalf of more than 3,000 families against Meta, Snap, TikTok, YouTube and others. It also helped start a national movement. More than 200 school districts and three dozen state attorneys general have filed lawsuits similar to the center’s. Some of those cases have been consolidated into multi-jurisdictional proceedings to streamline the pretrial discovery process, while others, including the one the 64 families are fighting against Snap, are moving forward on their own. The novel legal argument underpinning the suits has also emboldened families outside the US—in November, TikTok became the first platform to be sued for product liability in the European Union.

US courts delivered a series of rulings in 2024 that further chipped away at Section 230. In January, Lawrence Riff, a superior court judge in Los Angeles, ruled against Snap’s request to dismiss the case brought by Neville, Michael and the other families. Without judging the merits of the case, Riff wrote that numerous design decisions could have contributed to the overdose deaths, including ineffective age verification, lack of parental controls and a Quick Add feature “that facilitates drug dealers’ targeting of minors.” The families could proceed to discovery, he said. (In December a state appeals court denied Snap’s request to appeal, which means the company will have to start handing over internal documents and submit to depositions under oath, unless it manages to get the case before the Supreme Court.)

A federal appeals court in Pennsylvania issued a similar ruling in a case involving TikTok in August, saying a mother’s lawsuit over the death of her 10-year-old daughter, Nylah Anderson, could proceed. The court said TikTok wasn’t immunized by Section 230 after its algorithm served up a “blackout challenge” video to the girl, who then strangled herself while imitating it. TikTok hadn’t just published the video, the court ruled; the algorithm had promoted it. (A request by TikTok to reconsider was denied. The company has until January to appeal to the Supreme Court.)

In October a judge in California largely denied motions to dismiss more than 900 federal cases brought against Meta, Snap, TikTok owner ByteDance, YouTube and others. The cases, which include hundreds filed by the Social Media Victims Law Center as well as ones brought by school districts and state attorneys general, allege that the platforms were designed to addict minors, engaged in deceptive marketing and neglected to warn users about safety risks. The companies had sought to have the cases dismissed on Section 230 grounds, but the judge has kept them moving toward discovery. (Some of the companies have appealed.)

Eric Goldman, a law professor at Santa Clara University who’s been an outspoken critic of the movement to hold social media companies liable for user-generated content, calls some of the recent court decisions in favor of plaintiffs “bonkers.” In a blog post, he accused Riff of ignoring decades of binding precedent. “I’m sure the judge was stone-cold sober when he wrote this, but it’s the kind of statement I might expect if someone wrote a judicial opinion while tripping on acid,” Goldman wrote. The courts are ignoring that “gathering, organizing and disseminating” third-party content are, at their core, publication choices protected by Section 230, he says. “What does social media do? It publishes content.”

Daphne Keller, director of Stanford University’s Program on Platform Regulation, says she’s concerned that, without Section 230’s protections, platforms would either cease moderating entirely, leaving vile and violent posts visible to kids, or would overcensor and become so Disneyfied that they limit movements such as #MeToo and Black Lives Matter. “Both feel like problematic outcomes,” she says.

Snap agrees. “Section 230 is an important legal safeguard that encourages and protects our ability to screen and remove illicit drug-related content posted on our service,” a company spokesperson says.

Still, many in the industry say social media is approaching a moment like the one car companies faced under pressure from consumer advocates in the 1960s. “Social media platforms, as they are currently, are like a car without a seat belt,” says David Polgar, founder of the nonprofit All Tech Is Human, which seeks more responsible development of digital technologies. In Polgar’s view, safety will always be regarded by corporations as a killer of innovation. “You want these companies to be able to innovate without being so regulated it causes them to go out of business,” he says. “However, you balance that against the societal need of saying we want less deaths associated with the product. Right now, that balance is out of whack.”

The pressure on social media platforms isn’t coming only from lawsuits. Australia recently passed legislation forbidding the use of social media by kids under 16. The UK and European Union have rolled out new laws intended to make online spaces safer by holding tech companies accountable for user harms. And some US states have banned smartphones in schools.

CEOs including Snap’s Spiegel have been hauled before Congress to answer for the repeated deaths of kids using their platforms. The Senate voted 93-1 in July to pass the Kids Online Safety Act, which would require companies to put children’s well-being first when designing potentially addictive features. But the measure has stalled in the House, with Republican leaders saying it could lead to censorship. In the face of these pressures, many social media companies have spent millions of dollars on lobbying against KOSA and on public-relations campaigns highlighting their platforms’ popularity and their importance as vehicles of free expression and creativity.

Snap has taken a different approach to the pending legislation. In early 2024 it broke ranks with its industry trade group to support KOSA, saying its safeguards already align with the proposed bill. The company has doubled the size of its safety team since 2020 and tripled its law enforcement operations staff, though it won’t provide head counts. Snap says its machine-learning models now detect and block 94% of drug-related content before it can be reported by users. It has added additional safety layers to make it difficult for strangers to contact teenagers, and it sends pop-up warnings about dangerous content such as drugs. The company says users under 18 can now get Quick Add recommendations only from people who are in their phone contacts or with whom they share several mutual friends. Since 2022 parents have been able to see who their children are friends with on the app.

And after being criticized by some law enforcement officials for taking months to respond to search warrants, Snap says it now replies to 98% of these requests within the specified time. It also hosts meetings to coach law enforcement officials on how to write effective search warrants to obtain IP addresses and material users have chosen to save, if not deleted messages. The millions of pieces of drug-related content Snap detects are now retained to help criminal prosecutions, and the hundreds of thousands of accounts it deactivates for posting drug content are blocked at the device level, preventing dealers from creating another account if they’re banned.

Seven current and former employees who spoke to Businessweek on condition of anonymity to discuss confidential matters expressed pride in how far the company has come. But some say they hope the lawsuits push Snap to return to what made its app popular in the first place: connecting family and friends, not strangers. Maybe if these cases proceed to trial, two of the ex-employees say, Snap will be forced to turn off its Quick Add recommendation engine for minors. The feature has been questioned inside the company for years, the internal emails uncovered by New Mexico’s attorney general show. Shutting it down altogether might hamper growth, the two former employees acknowledge, but it would also make the platform safer, as kids would only be snapping with people they know in real life.

At least one grieving family is satisfied with what Snap is doing to improve the situation. They’ve chosen to work with Snap rather than join a lawsuit. Ed and Mary Ternan, whose son died after taking a fentanyl-laced pill purchased from a dealer he connected with on Snapchat in 2020, set up a charity called Song for Charlie to educate youth about the problem. They decided to meet kids where they were—on Snapchat. Their charity, which lists Snap as a partner, has reached tens of millions of teens, according to Ed Ternan, who also sits on the company’s safety advisory board. He declined to disclose how much money Snap has donated, but he says its safety efforts are working.

Ternan calls the lawsuits counterproductive. “Our goal is not litigating the past death, but preventing the next death,” he says. “No other social media or tech company is doing more to address the fentanyl crisis and its impact on American youth than Snap.”

Despite Snap’s efforts, kids keep dying of overdoses—almost 1,700 in the US in 2023, according to the Centers for Disease Control and Prevention. And parents keep tracing the deaths to fentanyl they allege was purchased via Snapchat. The death toll since the beginning of 2023 includes Cooper Root, 16, of Texas; Donevan Hester, 16, of Washington; and Nicholas Cruz Burris, 15, of Kansas.

Cruz, as Burris was known, was a high school freshman in Lansing, about 30 miles northwest of Kansas City. Late one school night in January 2023, he snorted a line of white powder on a FaceTime call with friends. A few minutes later they heard him gasping for breath, then silence. Cause of death: fentanyl.

Lansing, population 11,200, is one of those places where everyone knows everyone, and the whole town seemed to have heard about Cruz’s death by the time his body arrived at the morgue. Most of his classmates knew who’d sold him the pill, too. He’d sent screenshots of the dealer’s Snapchat account to a group thread, in case anyone else wanted a plug.

The dealer’s name was Torin Baughman. He was 18, and, in a small-town twist of fate, his younger brother had a crush on Cruz’s older sister. When word spread that Baughman was the culprit, the brother opened an app the family used to share their locations. It showed Baughman had pulled into Cruz’s cul-de-sac around 11 the previous night. Baughman’s brother sent a screenshot to Cruz’s sister, who forwarded it to her parents, who sent it to the police. But the screenshots and the geolocation data weren’t enough to press charges. Police wanted messages between Baughman and Cruz, and no one could unlock Cruz’s phone.

Cruz’s father, Andy Burris, who oversees the production of gift boxes and ribbons at a Hallmark Cards factory, appealed to the state’s attorney general for help. The case was referred to the Kansas Bureau of Investigation, which served a search warrant on Snap seeking records from Baughman’s account for the 24 hours leading up to Cruz’s death. Four days later, Snap provided Baughman’s phone number, his date of birth and a single video, saved by one of the teens, that Baughman had sent Cruz showing lines of powder on a phone screen. But there were no messages. They’d all been deleted. It wasn’t enough to prosecute.

Around the one-year anniversary of Cruz’s death, a forensic analyst finally cracked the passcode and unlocked Cruz’s phone, according to an affidavit filed in the case. The analyst found a Snapchat conversation between Cruz and Baughman somehow still preserved on the device. It started with Cruz asking Baughman at 9:38 p.m. if he was selling any psychedelics, before the chat turned to the possibility of buying a 30mg Percocet pill.

Baughman: nah I got weed addy perc

Cruz: shit lemme get a perc. Never tried them. How much

Baughman: for one it’s 20. Ima tell u now they perc 30 you gotta snort it like a tiny line

Cruz: oh shit wth

Baughman: Like a small line fr too much will have you

Cruz: would you recommend it for me

Baughman: how old you is

Cruz: 15. I just smoke weed and shrooms

Baughman: hmmm. Yeah imma give you one

The conversation continued, with Baughman telling Cruz the pill was “sprayed with fent” and that it’s “hella good it’ll chill u out feel like you sleeping but you awake.” At 11:10 p.m., he wrote, “pulling up now.”

It isn’t clear how the messages were preserved. Police may have switched Cruz’s phone to airplane mode when they bagged it as evidence the morning of his death, and the messages, set to delete after 24 hours, remained in his Snapchat app because the clock had stopped when the device was disconnected from the network. Snap says it can’t be sure why the messages weren’t included in its response to the search warrant.

In any event, it was the evidence prosecutors needed to file first-degree murder charges against Baughman. He pleaded guilty in November to distribution of a controlled substance causing death and will be sentenced in January. He faces 12 or more years in prison.

The Social Media Victims Law Center added the Burrises’ legal complaint to the Neville case in March 2023, weaving them into an expanding web of grief crisscrossing the country. Like the Nevilles in California and the Brewers in Florida, the Burrises in Kansas are readying themselves for a long battle against Section 230. They haven’t met the other plaintiffs, but they say they feel united by a shared goal: holding social media companies accountable for harming children.

“They know the algorithms they put out there, they know the code,” Andy Burris says. “And our children are vulnerable.” —With Ann Choi and Kurt Wagner
